Google’s new TPU 8t and 8i chips deliver massive scale for AI training and inference

In sum – what we know:

- Specialized silicon split – Google is continuing its strategy of bifurcating hardware, releasing the TPU 8t specifically for massive frontier model training and the TPU 8i for high-speed inference.

- Extreme scaling potential – The new Virgo Network topology is designed to enable near-linear scaling across clusters of up to 1 million chips, potentially reducing training times from months to weeks.

- Efficiency and cost gains – The new generation claims a 2x improvement in performance per watt and up to an 80% gain in performance-per-dollar for inference tasks compared to previous hardware.

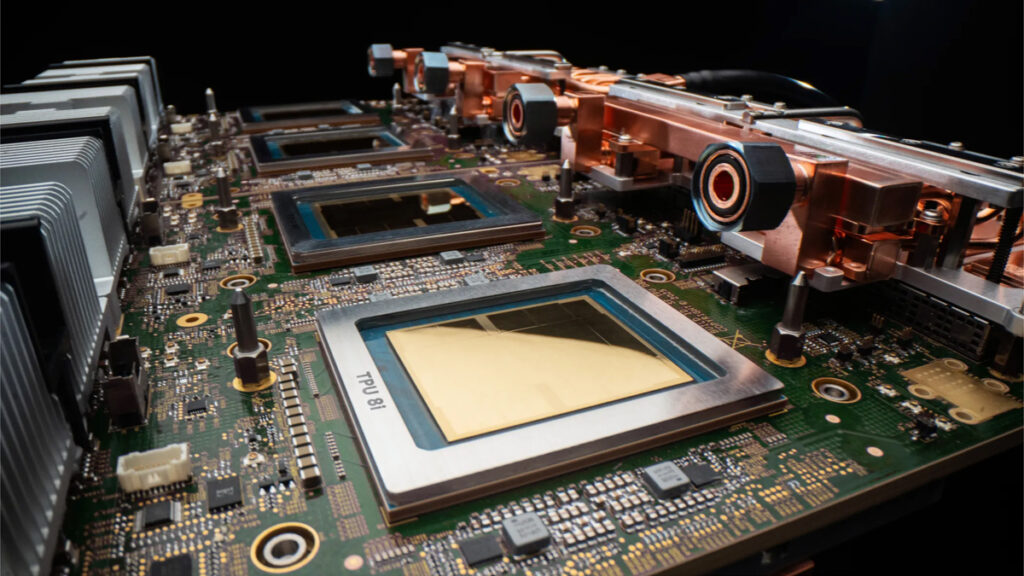

Google is running full steam ahead with its AI silicon. The company has taken the wraps off its latest-generation TPU chips: the TPU 8t is purpose-built for training, while the TPU 8i targets inference. It’s a continuation of Google’s aggressive AI hardware play, and not a surprising one.

Google has split training and inference across separate chips before, but it hasn’t always launched them at the same time. Google’s 6th-generation Trillium chip was a training chip, while the 7th-gen Ironwood TPU was designed for inference.

TPU 8t

The TPU 8t is aimed squarely at the massive and still-growing computational appetite of pre-training frontier AI models. A single TPU 8t superpod scales out to 9,600 chips sharing 2 petabytes of high-bandwidth memory, delivering 121 exaflops of compute. Google says that kind of power can compress frontier model development from months down to weeks. We’ll have to wait and see if that turns out to be accurate though.
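To put the superpod figures in per-chip terms, here is a minimal back-of-the-envelope sketch. It uses only the three numbers quoted above and assumes decimal units (1 PB = 1,000,000 GB) and that the totals divide evenly across chips. The function name `per_chip` is illustrative, and since the quoted totals are likely rounded, the results are approximate.

```python
# Figures quoted in the article for one TPU 8t superpod.
CHIPS = 9_600
HBM_PETABYTES = 2
EXAFLOPS = 121


def per_chip(chips, hbm_pb, exaflops):
    """Return (HBM in GB per chip, petaflops per chip), using decimal units."""
    hbm_gb = hbm_pb * 1_000_000 / chips   # 1 PB = 1,000,000 GB (decimal)
    petaflops = exaflops * 1_000 / chips  # 1 exaflop = 1,000 petaflops
    return hbm_gb, petaflops


hbm_gb, pflops = per_chip(CHIPS, HBM_PETABYTES, EXAFLOPS)
print(f"{hbm_gb:.0f} GB HBM per chip, {pflops:.1f} petaflops per chip")
```

Under those assumptions, the superpod works out to roughly 208 GB of HBM and about 12.6 petaflops per chip. The article does not state the numeric precision behind the exaflops figure, so the per-chip number should not be compared directly against chips rated at a different precision.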

Under the hood, inter-chip bandwidth has doubled relative to the prior generation, which is a critical upgrade for the communication-heavy patterns inherent to distributed training. Google has also baked in TPUDirect, claiming 10x faster storage access — going after one of the most persistent pain points in large-scale training runs. But the headline feature might be the new Virgo Network topology, a hierarchical networking architecture designed to enable near-linear scaling across up to 1 million chips in a single logical cluster. If that scaling behavior actually materializes in production, it would be a genuinely remarkable achievement in orchestrating massive training clusters without the usual catastrophic efficiency degradation.
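To see why near-linear scaling at that size is a demanding claim, consider a simple illustrative model in which every doubling of the chip count retains a fixed fraction of the previous efficiency. This geometric model, the function name `retained_efficiency`, and the retention values are assumptions chosen for illustration; they are not Google's figures or methodology.

```python
import math


def retained_efficiency(target_chips, base_chips, per_doubling):
    """Fraction of ideal linear speedup left after scaling from base_chips
    to target_chips, if each doubling keeps `per_doubling` of the efficiency.
    This geometric model is an illustrative assumption, not a vendor figure."""
    doublings = math.log2(target_chips / base_chips)
    return per_doubling ** doublings


# Scale from one 9,600-chip superpod up to the quoted 1 million chips.
for keep in (0.99, 0.95, 0.90):
    eff = retained_efficiency(1_000_000, 9_600, keep)
    print(f"{keep:.0%} kept per doubling -> {eff:.1%} of linear at 1M chips")
```

Going from 9,600 chips to 1 million is about 6.7 doublings. In this toy model, keeping 99% per doubling leaves roughly 93% of linear speedup, while 95% leaves about 71% and 90% leaves about 49%. Small per-step losses compound quickly, which is why the production behavior matters more than the headline number.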

On the software front, the TPU 8t is deeply integrated with JAX and Google’s Pathways framework, both purpose-built to make scaling across enormous chip arrays more tractable. This kind of hardware-software co-design is one of Google’s core strengths as a vertically integrated provider — though it does mean customers are buying into a more opinionated stack than the relative openness of Nvidia’s CUDA ecosystem.

TPU 8i

The TPU 8i is engineered for inference, and specifically the complex reasoning loops and multi-turn planning that define the emerging wave of AI agents. Each chip ships with 288 GB of high-bandwidth memory and 384 MB of on-chip SRAM, tripling the SRAM of the previous generation. That extra SRAM keeps the model’s working data physically close to the processor, cutting the latency penalties that pile up every time compute has to reach out to slower memory tiers.

TPU 8i pods scale to 1,152 chips producing 11.6 exaflops — a big step up from Ironwood’s 256-chip pods at 1.2 exaflops. Google credits part of this jump to what it describes as a “memory wall” breakthrough architecture, specifically designed to eliminate processor idle time by ensuring data flows to compute cores without interruption. The chips sit alongside custom Arm-based Axion CPU hosts, and Google has doubled the physical CPU hosts per server compared to last generation — targeting the data preparation bottlenecks that can strangle inference throughput in real production environments.
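The pod-level jump can be split into two factors: more chips per pod and more compute per chip. The sketch below does that arithmetic using only the pod figures quoted above. It assumes both exaflops numbers are measured at comparable precision, which the article does not confirm, and the function name is illustrative.

```python
def petaflops_per_chip(pod_exaflops, chips):
    """Average petaflops per chip, given a pod's total exaflops."""
    return pod_exaflops * 1_000 / chips


# Pod figures as quoted in the article.
tpu8i = petaflops_per_chip(11.6, 1_152)
ironwood = petaflops_per_chip(1.2, 256)

pod_ratio = 11.6 / 1.2            # total compute per pod, new vs. old
chip_count_ratio = 1_152 / 256    # chips per pod, new vs. old
per_chip_ratio = tpu8i / ironwood # compute per chip, new vs. old

print(f"TPU 8i:   {tpu8i:.2f} PF/chip")
print(f"Ironwood: {ironwood:.2f} PF/chip")
print(f"Pod gain {pod_ratio:.1f}x = {chip_count_ratio:.1f}x chips "
      f"* {per_chip_ratio:.2f}x per chip")
```

On these numbers, the roughly 9.7x pod-level gain comes from 4.5x as many chips per pod and about 2.15x more compute per chip, so most of the headline jump reflects larger pods rather than faster individual chips.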

The surrounding infrastructure reflects hard-won lessons from running these workloads at scale. Google is deploying non-uniform memory access (NUMA) optimizations and fourth-generation liquid cooling to manage the power densities involved. The bottom line, according to Google, is that organizations can serve nearly double the customer volume at the same cost.

Performance

Google’s performance numbers for both chips are impressive on paper, though vendor-reported benchmarks don’t always map cleanly onto real-world workloads. On the training side, the TPU 8t reportedly delivers a 2.7x to 2.8x improvement in price-performance over the prior generation. For inference, the TPU 8i claims an 80% gain in performance per dollar compared to its predecessor.
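A gain in performance per dollar is not the same as an equal-sized drop in cost, so it helps to convert between the two. The following sketch takes the multipliers quoted above at face value and assumes cost per unit of work is simply the inverse of performance per dollar; the function name is illustrative.

```python
def cost_reduction(gain_multiplier):
    """Fractional drop in cost per unit of work for a given
    performance-per-dollar multiplier (e.g. 1.8 for an 80% gain)."""
    return 1 - 1 / gain_multiplier


inference = cost_reduction(1.8)      # 80% gain in performance per dollar
training_low = cost_reduction(2.7)   # lower end of the quoted training range
training_high = cost_reduction(2.8)  # upper end of the quoted training range

print(f"Inference: {inference:.0%} lower cost per unit of work")
print(f"Training:  {training_low:.0%} to {training_high:.0%} lower")
```

Read this way, an 80% gain means 1.8x the work at the same spend, which matches the "nearly double the customer volume" framing, or about 44% less spend for the same work. The quoted training range corresponds to roughly 63% to 64% lower cost per unit of work.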

Across both chips, Google says the new generation achieves 2x better performance per watt relative to Ironwood, which is a meaningful improvement as energy costs and power availability become increasingly central bottlenecks in AI infrastructure buildouts.

Google’s big chip push

Google has increasingly pushed into what had largely been Nvidia’s territory, and the customer list reflects that. OpenAI, Anthropic, and Meta have all confirmed commitments, reportedly locking in multi-gigawatt capacity allocations on the new hardware.

That said, CUDA’s massive developer network creates substantial switching costs that don’t evaporate overnight. Google’s vertical integration cuts both ways, enabling tight hardware-software optimization, but also locking customers into Google’s stack in ways that constrain flexibility.