
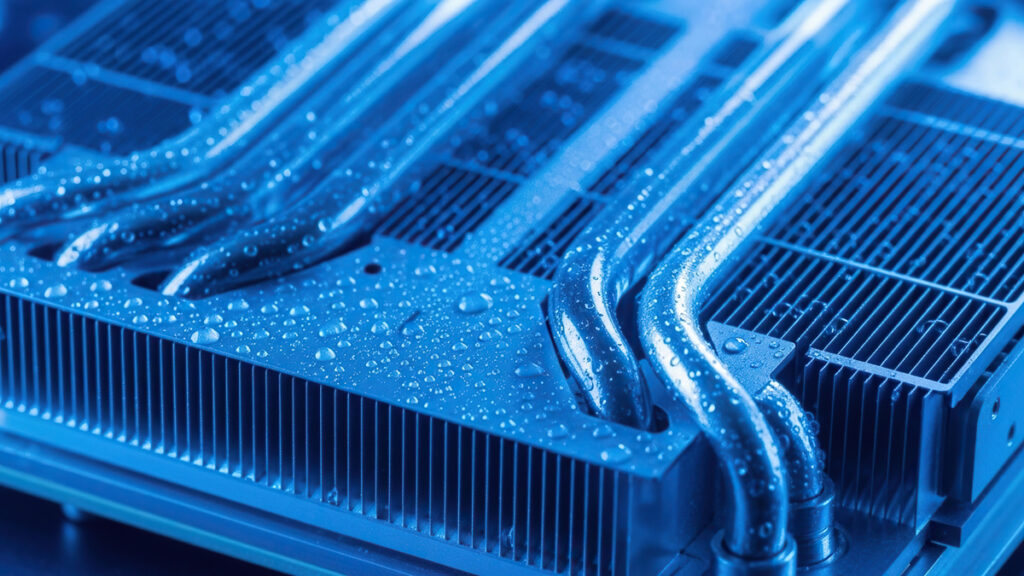

Why AI’s thermal wall is making liquid cooling mandatory

The AI hardware arms race has a problem beyond raw compute: heat. GPU makers are pushing thermal design power (TDP) ratings past 1,000 watts per chip, and the data center industry is facing a cooling crisis that fans alone might not be able to fix.

Next-gen silicon demands far more cooling capacity than the current generation, and that may require new cooling techniques. For the current buildout of data centers, liquid cooling might not be optional. But even liquid cooling brings its own set of engineering headaches, energy trade-offs, and supply chain question marks that the industry is still sorting out.

The physics of heat limits

Air cooling in data centers eventually hits a wall. Some studies claim it can handle up to 1 kW per chip, but serious limits appear at around 700 W of TDP per chip. Past that point, throwing more fans at the problem yields diminishing returns, not because fan technology has stagnated, but because of fundamental physical limits on how much heat air can absorb and carry away inside the tight confines of a server rack. Compared to liquids, air has poor thermal conductivity and low heat capacity. There’s only so much air you can force through densely packed hardware before turbulence, backpressure, and noise start working against you.
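To put rough numbers on that gap, the sketch below applies the steady-state heat balance Q = m_dot * cp * dT to one 700 W chip. The fluid properties are approximate textbook values near room temperature, and the 15 K coolant temperature rise is an assumption picked for illustration rather than a figure from any vendor or study. The function name is hypothetical.

```python
# Approximate fluid properties near room temperature (textbook values).
AIR = {"density": 1.2, "cp": 1005.0}      # kg/m^3, J/(kg*K)
WATER = {"density": 998.0, "cp": 4186.0}  # kg/m^3, J/(kg*K)


def volumetric_flow_m3_per_s(heat_w, delta_t_k, fluid):
    """Volume flow needed to carry heat_w with a coolant temperature rise of delta_t_k."""
    mass_flow = heat_w / (fluid["cp"] * delta_t_k)  # kg/s, from Q = m_dot * cp * dT
    return mass_flow / fluid["density"]


heat_w = 700.0    # one 700 W chip, the TDP figure discussed above
delta_t_k = 15.0  # ASSUMPTION: allowed coolant temperature rise

air_flow = volumetric_flow_m3_per_s(heat_w, delta_t_k, AIR)
water_flow = volumetric_flow_m3_per_s(heat_w, delta_t_k, WATER)

print(f"air:   {air_flow * 1000:.1f} L/s")
print(f"water: {water_flow * 1000 * 60:.2f} L/min")
print(f"volume ratio air/water: {air_flow / water_flow:.0f}x")
```

Under these assumptions the same heat load needs a few thousand times more air than water by volume, which is the gap that better fans cannot close.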

That’s worth emphasizing. The ceiling here is a physical boundary, not an engineering optimization problem. You can design a better fan, build cleverer airflow baffles, or space components further apart. But none of that changes the thermodynamic limits of air.

Escalating power demands

NVIDIA’s H100 GPUs dominated AI training for some time at 700W TDP, pushing average rack densities to around 17 kW. That already had traditional air-cooling systems sweating in a lot of facilities. Manageable, sure, but only barely, and only with careful airflow engineering and hot/cold aisle containment.

But the next generation is rolling out and it demands even more power. Modern AI racks now run at 40 to over 100 kW, with some deployments going well past that. For a sense of scale, a typical 50 kW rack throws off heat equivalent to 10 to 25 kitchen ovens running at once, all crammed into a space about the size of a refrigerator.
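The oven comparison is simple division. The sketch below assumes a household oven draws somewhere between 2 and 5 kW. That range is not stated above; it is an illustrative assumption that happens to reproduce the 10-to-25 figure.

```python
rack_kw = 50.0  # rack power from the comparison above

# ASSUMPTION: electrical draw of one kitchen oven, in kW.
oven_kw_low = 2.0
oven_kw_high = 5.0

ovens_min = rack_kw / oven_kw_high  # fewer ovens if each oven is powerful
ovens_max = rack_kw / oven_kw_low   # more ovens if each oven is modest

print(f"{rack_kw:.0f} kW rack = {ovens_min:.0f} to {ovens_max:.0f} ovens")
```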

The density makes everything worse. That extreme heat concentration creates localized hotspots—zones of intense thermal density that risk hardware degradation, performance throttling, and outright equipment failure if they aren’t managed with precision. And here’s the paradox: higher-density AI servers get packed tighter to handle greater computational demands, which leaves less physical room for cooling infrastructure. The denser the rack, the more sophisticated and space-efficient your cooling needs to be—yet there’s less space to actually put it.

The pressure drop

Liquid cooling solves the air problem, but it introduces its own engineering headache: pressure drop, the resistance coolant encounters as it moves through the system. Pressure drop directly affects system reliability, component longevity, and what it costs to keep everything running.

Flow resistance shows up at multiple points throughout a liquid cooling system. Cold plate internal channels, whose geometry varies depending on chip design, generate friction. Long hose runs and too many 90-degree bends create turbulence that chokes flow. Every fitting, gasket, and manifold junction piles on more resistance. Even the coolant itself plays a role — higher viscosity means more friction through narrow passages.
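One common way to estimate how these contributions add up is the Darcy-Weisbach equation for straight-run friction plus a loss coefficient for each fitting. The sketch below is a rough model under stated assumptions, not a design tool: it assumes a smooth round pipe, uses the Blasius correlation for turbulent flow, defaults to approximate water properties, and takes a loss coefficient of 0.9 per 90-degree bend, a typical handbook value that varies widely with fitting type. The function and parameter names are hypothetical.

```python
import math


def pressure_drop_pa(flow_l_per_min, diameter_m, length_m, n_bends,
                     density=998.0, viscosity=1.0e-3, k_bend=0.9):
    """Friction loss plus 90-degree bend losses for one hose run, in pascals.

    Defaults are approximate water properties (kg/m^3, Pa*s).
    k_bend is an ASSUMED loss coefficient per bend.
    """
    if flow_l_per_min <= 0:
        return 0.0
    area = math.pi * diameter_m ** 2 / 4.0
    velocity = (flow_l_per_min / 1000.0 / 60.0) / area  # m/s
    reynolds = density * velocity * diameter_m / viscosity
    # Laminar flow: f = 64 / Re. Turbulent flow: Blasius correlation (smooth pipe).
    if reynolds < 2300:
        friction = 64.0 / reynolds
    else:
        friction = 0.316 / reynolds ** 0.25
    dynamic_pressure = 0.5 * density * velocity ** 2
    return (friction * length_m / diameter_m + n_bends * k_bend) * dynamic_pressure


# Hypothetical run: 10 L/min through 2 m of 10 mm hose.
for bends in (2, 6):
    dp = pressure_drop_pa(10.0, 0.01, 2.0, bends)
    print(f"{bends} bends: {dp / 1000:.1f} kPa")

# Same run with a coolant three times as viscous as water.
print(f"viscous: {pressure_drop_pa(10.0, 0.01, 2.0, 2, viscosity=3.0e-3) / 1000:.1f} kPa")
```

In this model, both extra bends and a more viscous coolant raise the total, matching the qualitative picture above. Cold plate channels and manifolds would need their own measured or vendor-supplied loss data.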

When pressure drop gets out of hand, the consequences stack up fast. Pumps have to work harder to keep flow rates where they need to be, burning more energy and driving up operating costs. If flow rates still drop despite that extra pump effort, you get reduced coolant mass flow, thermal spikes, and localized component failures. Sustained high-pressure operation accelerates equipment wear and pushes systems toward premature breakdown. In refrigerant-based setups, too much pressure drop can trigger vapor formation that kills cooling capacity entirely. And in high-density racks, maybe the most dangerous outcome is uneven cooling distribution — one component running hot can cascade into failures across neighboring hardware.

Design solutions and optimization

The liquid cooling industry has already developed several practical ways to tackle the pressure drop problem, and many are running in production environments today. The single most impactful design decision is choosing parallel flow configurations over series arrangements. In a parallel setup, coolant gets distributed evenly across multiple components at the same time instead of flowing sequentially from one to the next. That cuts the total resistance any individual flow path faces and delivers much more uniform cooling across the rack.
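A simplified comparison shows why topology matters so much. The sketch below assumes identical cold plates whose pressure drop follows dP = k * Q^2, ignores manifold and hose losses, and uses approximate water properties. The coefficient k, the flow rate, and the heat load are hypothetical values chosen only to make the arithmetic visible.

```python
WATER_DENSITY = 998.0  # kg/m^3, approximate
WATER_CP = 4186.0      # J/(kg*K), approximate


def compare_topologies(n_plates, total_flow_lpm, k_kpa_per_lpm2,
                       heat_per_plate_w, supply_temp_c):
    """Compare series and parallel loops fed with the same total pump flow.

    Each plate is modeled as dP = k * Q**2 (an ASSUMED quadratic loss law).
    Returns (series, parallel) dicts with pressure drop and plate inlet temps.
    """
    mass_flow = total_flow_lpm / 1000.0 / 60.0 * WATER_DENSITY  # kg/s
    rise_per_plate = heat_per_plate_w / (mass_flow * WATER_CP)  # K at full flow

    series = {
        # Full flow passes through every plate, so the drops add up.
        "pressure_drop_kpa": n_plates * k_kpa_per_lpm2 * total_flow_lpm ** 2,
        # Each plate receives coolant preheated by the plates before it.
        "plate_inlet_temps_c": [supply_temp_c + i * rise_per_plate
                                for i in range(n_plates)],
    }
    branch_flow = total_flow_lpm / n_plates
    parallel = {
        # Every branch sees the same drop at a fraction of the flow.
        "pressure_drop_kpa": k_kpa_per_lpm2 * branch_flow ** 2,
        # Every plate receives coolant at the supply temperature.
        "plate_inlet_temps_c": [supply_temp_c] * n_plates,
    }
    return series, parallel


series, parallel = compare_topologies(
    n_plates=4, total_flow_lpm=8.0, k_kpa_per_lpm2=0.5,
    heat_per_plate_w=1000.0, supply_temp_c=30.0)
print("series:  ", series)
print("parallel:", parallel)
```

With the same total pump flow, the series loop in this model pays n^3 times the pressure drop and hands its last plate the warmest coolant, while the parallel loop gives every plate supply-temperature coolant. Real manifolds add losses and flow imbalance of their own, which is why manifold design gets so much attention.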

Beyond flow topology, thoughtful physical routing makes a measurable difference. Minimizing 90-degree bends and keeping pipe runs as short as possible reduces the turbulence that eats into flow efficiency. Even one unnecessary bend creates measurable pressure loss — pipe routing and component placement have to be intentional, not an afterthought. On the fluid side, low-viscosity coolants reduce friction resistance throughout the system, though coolant choice involves its own trade-offs around material compatibility and thermal performance.

At the component level, specialized high-flow quick-disconnect fittings with optimized internal geometries help keep flow rates healthy at connection points that would otherwise become bottlenecks. Engineered manifolds with parallel flow paths balance coolant load across servers, preventing the uneven distribution that spawns hotspots. None of these solutions are groundbreaking in isolation, but taken together, they can be the difference between a liquid cooling system that actually works reliably at scale and one that creates more headaches than it solves.

Competing cooling technologies

The cooling technology landscape for AI infrastructure has settled into a rough hierarchy, with each tier targeting progressively higher power densities. Air cooling is effectively dying for high-density AI workloads. Single-phase liquid cooling has taken the lead as the most commercially proven approach, circulating coolant through cold plates mounted directly to chips. It handles TDP ranges up to around 2,700W and has the most mature supply chain and real-world deployment track record.

Two-phase liquid cooling and immersion cooling are the next step. Two-phase designs, whether built around cold plates or immersion tanks, offer potentially superior heat transfer efficiency by leveraging phase-change thermodynamics: the liquid absorbs heat as it transitions to vapor. In theory, that means handling even greater thermal loads with less coolant volume.

Immersion systems carry particular risks around fluid compatibility and leaks compared to cold plate approaches. Submerging entire servers in dielectric fluid introduces complications around maintenance, component servicing, and long-term material degradation that cold plates simply don’t have.

There’s also a hybrid category gaining traction: liquid-air systems that apply liquid cooling selectively where thermal loads peak while relying on air for lower-power components. It’s a pragmatic middle ground, though it adds design complexity.

For infrastructure planners making decisions right now, single-phase liquid cooling is the safest bet for most high-density AI deployments. Two-phase and immersion technologies are worth keeping an eye on, but they aren’t ready for widespread production reliance yet.

The energy cost

Ironically, liquid cooling systems are themselves energy-intensive. They solve the physics problem of dissipating heat at extreme densities, but they shift a meaningful chunk of that energy burden over to facility-level power consumption. A data center rolling out liquid cooling across hundreds of high-power racks doesn’t eliminate its energy footprint. It redistributes it, and frequently increases it.

Chillers, pumps, and coolant circulation infrastructure all draw substantial power. A facility-grade chiller plant capable of rejecting heat from hundreds of kilowatts of AI compute is itself a major electrical load. Pump energy scales with system size and pressure drop — so poorly designed systems with high pressure drop waste electricity forcing coolant through inefficient paths. Coolant circulation losses compound, and overall facility-level power density tends to climb once liquid cooling infrastructure enters the equation.
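The link between pressure drop and pump energy can be sketched with the standard hydraulic power relation, P = dP * Q / efficiency. The flow rate, pressure drops, and the 50% combined pump and motor efficiency below are hypothetical values for illustration, not measurements from any facility.

```python
HOURS_PER_YEAR = 8760


def pump_power_w(pressure_drop_kpa, flow_l_per_min, efficiency=0.5):
    """Electrical power needed to push a flow against a pressure drop.

    efficiency is an ASSUMED combined pump and motor efficiency (0 to 1).
    """
    flow_m3_per_s = flow_l_per_min / 1000.0 / 60.0
    hydraulic_w = pressure_drop_kpa * 1000.0 * flow_m3_per_s
    return hydraulic_w / efficiency


def annual_energy_kwh(power_w):
    """Energy used by a load that runs continuously for one year."""
    return power_w * HOURS_PER_YEAR / 1000.0


# Hypothetical loop: 100 L/min, comparing a well-routed and a poorly routed design.
for label, dp_kpa in (("low-loss loop", 150.0), ("high-loss loop", 300.0)):
    power = pump_power_w(dp_kpa, 100.0)
    print(f"{label}: {power:.0f} W, {annual_energy_kwh(power):.0f} kWh/year")
```

At a fixed flow rate, pump power in this model rises in direct proportion to pressure drop, so doubling the loop resistance doubles the electricity spent on circulation, before chiller load is counted.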

The upshot is that the cooling crisis can’t be solved through thermal engineering alone. It demands grid infrastructure upgrades to deliver the power these facilities need, renewable energy sourcing to offset increased consumption, or strategic geographic placement near regions with both adequate power supply and favorable ambient conditions for heat rejection. Dropping a liquid-cooled AI facility in a hot, grid-constrained region compounds every single one of these problems at once.

Infrastructure planning

For infrastructure planners, the specifics of liquid cooling system design directly determine whether a deployment runs reliably or turns into a maintenance nightmare. Manifold geometry is one of the most consequential and most overlooked design decisions out there. Poor manifold design creates flow bottlenecks that undercut the entire cooling system’s effectiveness, no matter how well everything else is engineered. Getting this right takes deliberate engineering work, not off-the-shelf parts.

Coolant selection and component placement deserve equal attention. Viscosity, material compatibility, and thermal performance characteristics all factor into coolant choice, and getting it wrong affects both thermal efficiency and long-term component lifespan. Cold plate design is chip-specific — there’s no universal solution, which means cooling infrastructure has to be planned hand-in-hand with hardware procurement decisions. Pipe routing needs to be intentional, with every bend and run length justified, because each unnecessary pressure loss point chips away at system performance.

The reliability payoff for nailing these decisions is significant. Systems with lower pressure drop tend to need less frequent maintenance, see fewer cascading failures in densely packed racks, and last longer overall by reducing pump strain. That said, the supply chain for liquid cooling components remains uneven. Some parts are available at scale without issue, while others face lead times and quality variability that can throw off deployment timelines. Two-phase and immersion technologies haven’t been standardized or widely deployed at hyperscale yet, and regulatory frameworks around coolant handling and environmental impact are still taking shape. Infrastructure planners building for the next wave of AI chips should treat liquid cooling as the baseline assumption, but they should also build in enough flexibility for a technology landscape that’s still shifting fast.