Operators are rapidly shifting from traditional air cooling to advanced liquid-to-air distribution systems

Data centers are going through a massive shift. Facilities that used to measure capacity in megawatts are now pushing toward gigawatt territory, driven by the relentless compute demands of AI training, inference workloads, and cloud expansion. The result? More servers, but also a whole lot more heat.

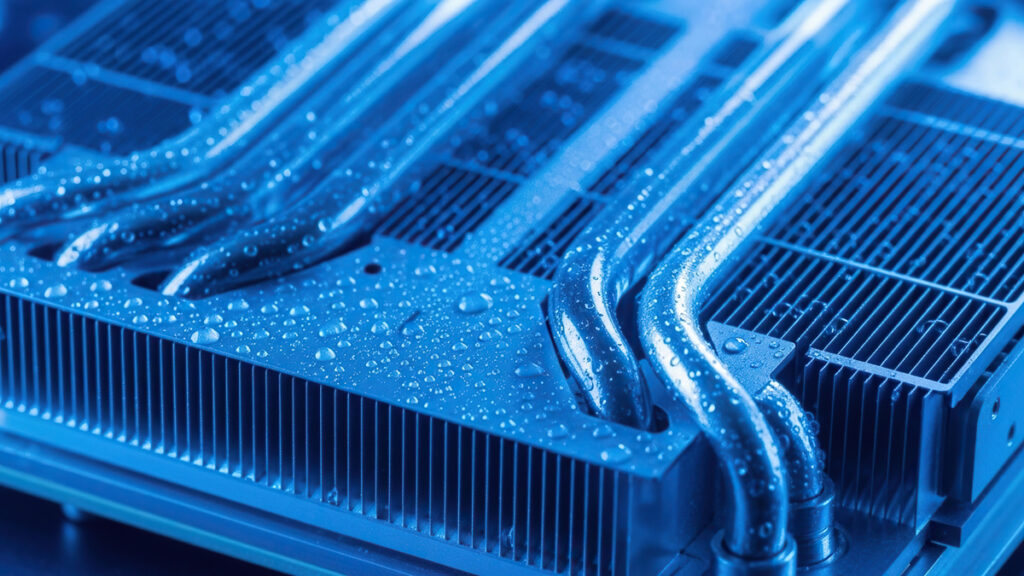

At the center of this challenge sits the procurement of liquid-to-air coolant distribution units, or CDUs. These systems use liquid to pull heat directly off chips, then rely on air to reject that thermal energy into the surrounding environment. As rack densities climb beyond what traditional air cooling can handle, demand for these units has surged, and actually getting hold of them has become a significant bottleneck. Vertiv, LG, Asetek, and ZutaCore are all expanding their CDU product lines, but supply simply hasn’t kept pace with the rate of new construction and retrofits.

The underlying technology here isn’t particularly novel. Liquid cooling has been standard in high-performance computing and industrial settings for decades. What’s changed is the scale and urgency. Deploying liquid-to-air CDUs across dense AI environments, where thousands of GPUs are generating enormous thermal loads in tightly packed configurations, represents a fundamentally different use case than anything the industry has dealt with before.

Physical limitations of air and the transition to liquid cooling

Air cooling hits a wall at around 41.3kW per rack. Past that threshold, the sheer volume of air you’d need to move enough heat becomes impractical to deliver through conventional duct and fan systems. For years, this wasn’t a huge issue — traditional racks rarely pushed past 20kW. But the current generation of AI hardware has blown right through that ceiling.

Modern GPUs generate upwards of 700 watts per chip. Fill a rack with these processors and you’re looking at power densities of 50kW, 100kW, or more. The thermal load these systems produce simply cannot be addressed by moving air over heat sinks, no matter how many fans you throw at it.
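
To put rough numbers on the airflow problem, the sketch below applies the standard sensible-heat relation (heat = density x specific heat x volumetric flow x temperature rise) to a few rack sizes. The air properties, the 15 K temperature rise across the rack, and the 72-GPU rack are illustrative assumptions rather than vendor figures; only the 700 watts per GPU comes from the text above.

```python
# Rough airflow estimate from the sensible-heat relation:
#   heat [W] = density [kg/m^3] * cp [J/(kg*K)] * flow [m^3/s] * delta_T [K]
# All constants below are illustrative assumptions.

AIR_DENSITY = 1.2      # kg/m^3, assumed (air near 20 C at sea level)
AIR_CP = 1005.0        # J/(kg*K), assumed specific heat of dry air
M3S_TO_CFM = 2118.88   # cubic metres per second to cubic feet per minute


def gpu_heat_kw(gpu_count: int, watts_per_gpu: float = 700.0) -> float:
    """GPU-only heat load of a rack in kW (ignores CPUs, memory, and so on)."""
    return gpu_count * watts_per_gpu / 1000.0


def airflow_m3_per_s(heat_kw: float, delta_t_k: float = 15.0) -> float:
    """Airflow needed to remove heat_kw with an air temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_kw * 1000.0 / (AIR_DENSITY * AIR_CP * delta_t_k)


# 72 GPUs per rack is a hypothetical count used only for illustration.
for rack_kw in (20.0, 50.0, 100.0, gpu_heat_kw(72)):
    flow = airflow_m3_per_s(rack_kw)
    print(f"{rack_kw:6.1f} kW -> {flow:5.2f} m^3/s ({flow * M3S_TO_CFM:,.0f} CFM)")
```

Required airflow scales linearly with heat load, so under the same assumptions a 100kW rack needs five times the air of a 20kW rack through the same physical footprint.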

Liquid cooling helps solve that. Liquid absorbs and transports far more heat than air while using less energy to do it. In practical terms, air-cooled systems typically deliver around 20 to 30 teraflops per square foot, while liquid-cooled configurations enable 100 to 150 teraflops per square foot, roughly a 5x improvement in compute density. For operators trying to squeeze maximum return out of increasingly expensive real estate and power infrastructure, that’s a big deal. Traditional facilities are transitioning from supporting 20kW-plus rack power requirements to 50kW or more, and liquid cooling helps make that jump possible.

How liquid-to-air systems operate

The coolant distribution unit sits at the heart of any liquid-to-air cooling setup. It manages circulation of coolant through the entire system, typically incorporating redundant pumps for reliability and leak detection for safety. Think of the CDU as the central hub keeping fluid moving between server hardware and heat rejection equipment.

Direct-to-chip liquid cooling is the most common approach today. Cold plates mount directly onto CPUs and GPUs, with coolant circulating through them at temperatures between 15 and 30 degrees Celsius. This handles roughly 70 to 80 percent of a server’s heat output, while traditional fans take care of the remaining thermal load from memory, storage, and other components. It’s a targeted strategy: liquid goes where the heat is most intense, and air handles everything else.
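
The same kind of heat balance gives a feel for cold-plate loop sizing. The sketch below splits a rack's heat between liquid and air using the 70 to 80 percent range above, then estimates the coolant flow needed for the liquid share. Treating the coolant as plain water, the 10 K loop temperature rise, and the 80kW example rack are assumptions for illustration; real loops often use water-glycol mixtures with different properties.

```python
# Heat split and coolant flow for a direct-to-chip loop.
# Coolant properties are assumptions (plain water), not a vendor specification.

WATER_CP = 4186.0       # J/(kg*K), assumed
WATER_DENSITY = 997.0   # kg/m^3, assumed (water near 25 C)


def split_heat_kw(rack_kw: float, liquid_fraction: float) -> tuple[float, float]:
    """Return (heat to liquid, heat left for air) in kW."""
    if not 0.0 <= liquid_fraction <= 1.0:
        raise ValueError("liquid_fraction must be between 0 and 1")
    liquid_kw = rack_kw * liquid_fraction
    return liquid_kw, rack_kw - liquid_kw


def coolant_flow_l_per_min(heat_kw: float, delta_t_k: float = 10.0) -> float:
    """Water flow needed to absorb heat_kw with a coolant temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_s = heat_kw * 1000.0 / (WATER_CP * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY * 1000.0 * 60.0  # m^3/s -> L/min


# 80 kW is a hypothetical rack used only as an example.
for fraction in (0.70, 0.80):
    liquid_kw, air_kw = split_heat_kw(80.0, fraction)
    flow = coolant_flow_l_per_min(liquid_kw)
    print(f"{fraction:.0%} to liquid: {liquid_kw:.0f} kW liquid, "
          f"{air_kw:.0f} kW air, about {flow:.0f} L/min of coolant")
```

Even at the upper end of that range, a meaningful share of the rack's heat still has to be removed by fans, which is why these deployments keep their air-handling equipment.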

Single-phase direct-to-chip cooling currently commands a 47 percent market share, and for good reason. It requires minimal modifications to existing server designs, making it viable for retrofit installations where operators don’t want to rip out and replace their entire hardware fleet. The coolant stays liquid throughout the loop, which keeps things relatively straightforward to operate and maintain.

Two-phase direct-to-chip cooling takes a fundamentally different approach. Here, the coolant actually boils at the chip surface, typically at around 50 degrees Celsius, and that phase change from liquid to vapor carries away significantly more thermal energy through the latent heat of vaporization. Heat removal performance is superior, but you pay for it in system complexity. Managing vapor return, dealing with specialized refrigerants, and absorbing the higher costs of two-phase infrastructure have kept adoption limited for now.
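
A back-of-the-envelope comparison shows why boiling moves so much more heat per kilogram of coolant. The fluid properties below are placeholder values of roughly the order of magnitude seen in fluorinated dielectric coolants; they are assumptions for illustration, not data for any specific product, and the 10 K single-phase temperature rise is likewise assumed.

```python
# Sensible heating versus boiling, per unit of coolant mass flow.
# Both fluid properties are placeholders, not datasheet values.

FLUID_CP = 1100.0               # J/(kg*K), placeholder specific heat
FLUID_LATENT_HEAT = 100_000.0   # J/kg, placeholder latent heat of vaporization


def single_phase_mass_flow(heat_w: float, delta_t_k: float,
                           cp: float = FLUID_CP) -> float:
    """kg/s needed when the coolant only warms up by delta_t_k."""
    return heat_w / (cp * delta_t_k)


def two_phase_mass_flow(heat_w: float, latent_heat: float = FLUID_LATENT_HEAT,
                        vapor_fraction: float = 1.0) -> float:
    """kg/s needed when a given fraction of the coolant boils off at the chip."""
    if not 0.0 < vapor_fraction <= 1.0:
        raise ValueError("vapor_fraction must be in (0, 1]")
    return heat_w / (latent_heat * vapor_fraction)


chip_w = 700.0  # per-chip heat figure quoted earlier in the article
single = single_phase_mass_flow(chip_w, delta_t_k=10.0)
boiling = two_phase_mass_flow(chip_w)
print(f"single-phase: {single * 1000:.1f} g/s, two-phase: {boiling * 1000:.1f} g/s")
print(f"ratio: {single / boiling:.1f}x less mass flow with boiling")
```

The exact ratio depends entirely on the fluid and on how much of it actually vaporizes, so the output should be read as an indication of scale rather than a performance claim.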

Whether the system runs single-phase or two-phase, the final step is the same: heat exchangers transfer thermal energy from the primary liquid cooling loop to a secondary loop, which ultimately ejects that heat into the surrounding environment — typically through air-cooled radiators or dry coolers positioned outside the facility.

Current deployment standards and hybrid models

Pure liquid cooling gets the headlines, but the reality in production is more nuanced. Hybrid architectures combining air and liquid systems are the practical deployment standard across the industry right now. These setups let operators repurpose existing air-cooling infrastructure while selectively layering in liquid cooling where it’s needed most — avoiding the disruption and cost of a full facility overhaul.

The hybrid model also provides built-in redundancy. If one system encounters issues — a pump failure in the liquid loop, say, or a fan array going down — the unaffected system keeps operating and holds hardware within safe thermal limits.

In practice, the decision comes down to rack density. Racks pulling under 15kW are still perfectly well served by conventional air cooling: it’s cost-effective, proven, and simple. Above that, and up to roughly 40kW, operators typically augment air cooling with rear-door heat exchangers, extending the useful life of existing air infrastructure without major investment. At 40 to 100kW per rack, direct-to-chip liquid cooling becomes the go-to solution, offering the right balance of proven performance and manageable complexity. And for the most extreme deployments, with racks exceeding 100kW, single-phase immersion cooling is generally required to handle the thermal load.
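
The density tiers above can be written down as a simple lookup. This is a minimal sketch of the rule of thumb as described here, not an engineering standard; the function name is hypothetical, and treating the 15 to 40kW band as the rear-door heat exchanger tier is an inference from the neighboring thresholds.

```python
def recommend_cooling(rack_kw: float) -> str:
    """Map rack power density (kW) to the cooling tier described in the article."""
    if rack_kw < 0:
        raise ValueError("rack_kw must be non-negative")
    if rack_kw < 15:
        return "conventional air cooling"
    if rack_kw < 40:  # assumed upper bound for the rear-door tier
        return "air cooling with rear-door heat exchangers"
    if rack_kw <= 100:
        return "direct-to-chip liquid cooling"
    return "single-phase immersion cooling"


# Hypothetical racks in a mixed-density hall.
for kw in (8, 25, 60, 130):
    print(f"{kw:>4} kW rack -> {recommend_cooling(kw)}")
```

In a real facility the cut-over points would shift with climate, containment quality, and the hardware being deployed, so the thresholds are best treated as adjustable parameters.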

This tiered split means most modern data centers aren’t choosing between air and liquid. They’re running both, deploying each technology where it makes the most economic and thermal sense.

Efficiency, costs, and regulatory pushes

The efficiency gains from liquid cooling are substantial. These systems routinely achieve a power usage effectiveness (PUE) of 1.05 to 1.15, meaning that for every watt delivered to computing, only about 0.05 to 0.15 watts goes to cooling and other facility overhead. Traditional air-cooled facilities typically land between 1.4 and 1.8 PUE, a significant energy penalty that compounds rapidly at scale.
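
Since PUE is total facility power divided by IT power, the overhead implied by these figures is easy to work out. The sketch below does so for a hypothetical 1MW IT load running all year; the load and the constant-utilization assumption are illustrative, and the overhead includes every non-IT load (power distribution, lighting), not cooling alone.

```python
# Overhead implied by a PUE figure: PUE = total facility power / IT power.

HOURS_PER_YEAR = 8760


def overhead_kw(it_kw: float, pue: float) -> float:
    """Non-IT power (cooling, power distribution, etc.) for a given IT load."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_kw * (pue - 1.0)


def annual_overhead_mwh(it_kw: float, pue: float) -> float:
    """Yearly overhead energy, assuming the IT load is constant all year."""
    return overhead_kw(it_kw, pue) * HOURS_PER_YEAR / 1000.0


IT_LOAD_KW = 1000.0  # hypothetical 1 MW IT load
for label, pue in (("liquid, best case", 1.05), ("liquid, typical high", 1.15),
                   ("air, best case", 1.4), ("air, worst case", 1.8)):
    print(f"{label:22s} PUE {pue:.2f}: {overhead_kw(IT_LOAD_KW, pue):6.0f} kW overhead, "
          f"{annual_overhead_mwh(IT_LOAD_KW, pue):7.0f} MWh/year")
```

Because overhead scales with IT load, the same PUE gap costs proportionally more as a site grows from megawatts toward gigawatts.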

That said, air cooling shouldn’t be written off entirely. Well-deployed air systems using hot aisle/cold aisle containment can cut cooling energy consumption by 20 to 30 percent and remain competitive at lower densities. Google, for instance, has achieved a PUE of 1.10 using advanced air cooling alone. The problem is that as rack densities climb, the gap between what air can deliver and what liquid achieves only widens.

On the cost front, retrofit liquid cooling installations run approximately $2 to $3 million per megawatt — actually 20 to 30 percent cheaper than comparable air upgrade alternatives. Upfront capital expenditure for liquid cooling is higher when building from scratch, but that investment gets offset over time by reduced operating costs and the ability to pack significantly more compute into the same footprint. For operators running expensive AI hardware, getting more teraflops per square foot can dramatically change facility economics.

Sealed liquid cooling systems also offer an operational advantage that’s easy to overlook. They’re largely immune to external environmental conditions. A facility running liquid cooling maintains consistent performance during extreme heat, humidity, or even sandstorms — conditions that can degrade or entirely shut down air-cooled operations.

Key manufacturers

The liquid cooling market is projected to approach $2 billion by 2027, growing at roughly a 60 percent compound annual growth rate from 2020 to 2027. That’s an extraordinary pace, driven primarily by the surge in cloud services and AI workloads, with blockchain and cryptocurrency applications contributing to a lesser extent.

Major enterprise deployments are already underway. Microsoft began fleet deployment of liquid cooling across its Azure campuses in mid-2025 and is testing microfluidic cooling for future hardware generations. Colovore has secured a $925 million facility designed to offer up to 200kW per rack — a density that would be unthinkable without liquid cooling infrastructure backing it.

On the supplier side, several key manufacturers are scaling their liquid-to-air CDU offerings to meet demand. Vertiv is broadening liquid cooling capabilities across its product portfolio. LG has announced new CDU and cold plate solutions targeting high-density computing environments specifically. Asetek’s InRackCDU system supports up to 80kW per rack with redundant pumps and leak detection built in. And ZutaCore is pushing the performance envelope with waterless direct liquid cooling systems.

The market is growing fast, but demand is growing faster. As data centers continue their march from megawatt to gigawatt capacities, the ability to source and deploy liquid-to-air CDUs at the pace required remains a challenging logistical problem.